The four foundations that determine whether enterprise AI creates value or amplifies chaos.

Enterprise organisations are adopting AI assistants at an accelerating pace. Microsoft 365 Copilot, agents built on various AI platforms, custom RAG pipelines: the technology is moving fast, and the pressure to deploy is real. Gartner predicts that by 2026 more than 80% of enterprises will have used generative AI APIs or models, or deployed generative AI applications in production, up from less than 5% in 2023.

But a consistent pattern is emerging from the organisations that have gone first: disappointment.

Not because the AI models are bad. Not because the technology doesn’t work. The models are extraordinary. The technology is genuinely impressive. The disappointment comes from something nobody talked about during the sales pitch:

AI amplifies whatever it finds.

If your organisational content is well-structured, properly governed, and high quality, AI produces trustworthy, useful outputs. If your content is messy, unsecured, duplicated, and poorly organised, AI faithfully amplifies that mess. It does so faster, more confidently, and at a scale that no human ever could.

This is not a bug. It is the fundamental architecture of how retrieval-augmented generation works. The AI searches your content, retrieves the most “relevant” results, and synthesises an answer. If your most “relevant” content includes a policy that was superseded two years ago, a draft that was never approved, and three conflicting versions of the same procedure — well, the AI will synthesise all of that into a single, confident, wrong answer.
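To make that concrete, here is a minimal sketch of the retrieval step. It assumes a toy keyword-overlap scorer in place of a real embedding search, and the documents, the `status` labels, and the `retrieve` function are all hypothetical. The only point is that nothing in the ranking ever looks at `status`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    title: str
    text: str
    status: str  # "current", "superseded", or "draft" (illustrative labels)


def relevance(query: str, doc: Doc) -> float:
    """Toy relevance: fraction of query words that appear in the document."""
    query_words = set(query.lower().split())
    doc_words = set(doc.text.lower().split())
    return len(query_words & doc_words) / len(query_words)


def retrieve(query: str, docs: list[Doc], k: int = 3) -> list[Doc]:
    """Return the k highest-scoring documents. Status is never consulted."""
    return sorted(docs, key=lambda d: relevance(query, d), reverse=True)[:k]


# Hypothetical content: three versions of one policy plus an unrelated file.
DOCS = [
    Doc("Remote work policy v3", "remote work policy staff may work remotely three days", "current"),
    Doc("Remote work policy v1", "remote work policy staff may work remotely one day", "superseded"),
    Doc("Remote work policy DRAFT", "remote work policy proposal fully remote work", "draft"),
    Doc("Canteen menu", "lunch menu for this week", "current"),
]

top = retrieve("remote work policy", DOCS)
statuses = sorted(d.status for d in top)
print(statuses)
```

All three policy versions match the query equally well, so the current, superseded, and draft documents all land in the context the model is asked to synthesise.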

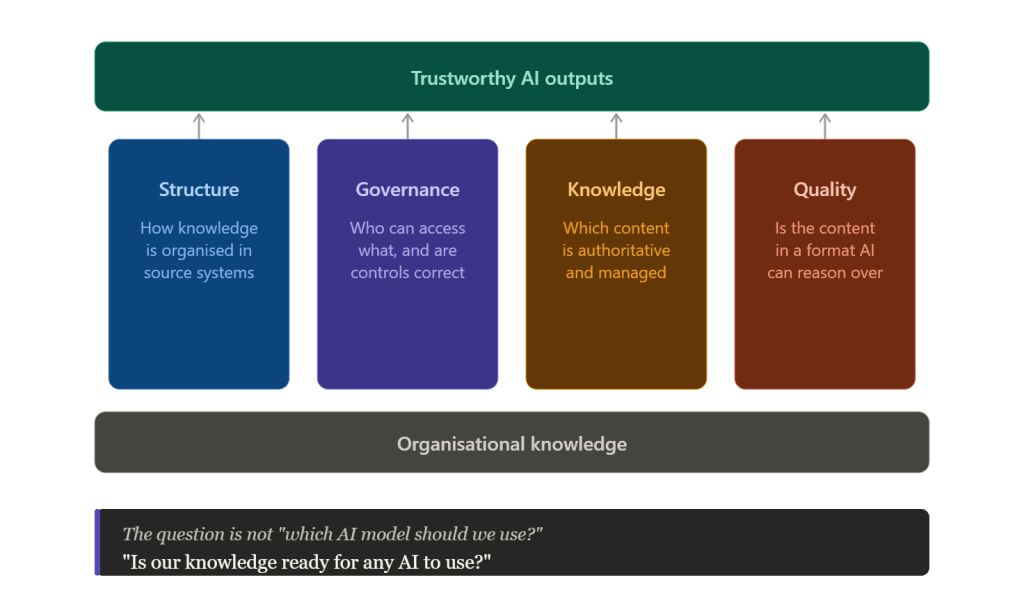

The Four Foundations

The AI model itself is increasingly a commodity. The differentiator, the thing that separates enterprises that extract real value from AI from those that don’t, is the state of their organisational knowledge. Specifically, four foundations:

1. Structure

How is your knowledge organised in source systems? The hierarchy of sites, folders, and repositories. The information architecture. The naming conventions. The relationship between different repositories.

AI systems navigate your content through these structures. If your SharePoint has 300 sites with no clear hierarchy, no consistent naming, and abandoned repositories mixed in with active ones, the AI has no way to distinguish signal from noise. It treats a folder called “Old Stuff — DO NOT USE” the same as your current compliance library. It does not read folder names with human judgment. It reads them with retrieval algorithms.

The structural question is: can an AI system navigate your content and understand what belongs where?

2. Governance

Who can access what? Permissions, sharing settings, external exposure, sensitivity alignment.

This is where things get dangerous. AI systems surface content through your existing access controls. If those controls are wrong — and in most enterprises, they are at least partially wrong — AI surfaces the wrong content to the wrong people. That board-level strategy document that was shared with “everyone except external users” three years ago? AI will happily include it in a summary for a summer intern who asked about company strategy.
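A minimal sketch of why this happens, assuming a simplified model in which each document carries a set of allowed groups and retrieval simply keeps whatever overlaps with the user's groups. The group names, document titles, and `visible_to` function are hypothetical and do not represent any particular platform's API.

```python
from dataclasses import dataclass, field


@dataclass
class Doc:
    title: str
    allowed_groups: set[str] = field(default_factory=set)


def visible_to(user_groups: set[str], docs: list[Doc]) -> list[str]:
    """Security trimming: keep documents whose ACL overlaps the user's groups."""
    return [d.title for d in docs if d.allowed_groups & user_groups]


docs = [
    Doc("Canteen menu", {"all-staff"}),
    # Over-shared years ago: the broad group was never removed.
    Doc("Board strategy", {"board", "all-staff"}),
    Doc("M&A target list", {"board"}),
]

intern_groups = {"all-staff", "interns"}
intern_results = visible_to(intern_groups, docs)
print(intern_results)
```

The trimming logic is doing exactly what it was told. The leak comes from the stale `all-staff` grant on the strategy document, which is a content governance problem rather than an AI problem.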

The governance question is: will AI surface the right content to the right people?

3. Knowledge

Which content is authoritative? Do you have designated knowledge bases? Clear ownership? Lifecycle management? Archival rules?

Without this, AI treats every document as equally valid. The approved, current, authoritative version of a policy carries the same weight as the draft that someone saved to their personal OneDrive eighteen months ago and forgot about. The employee handbook from 2019 is just as “relevant” as the one updated last quarter. The AI has no concept of authority — it has relevance scoring, and relevance scoring does not know which document the compliance team actually endorsed.
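The gap between relevance and authority can be sketched in a few lines. This assumes the retriever has already produced a relevance score for each candidate, and that the organisation maintains an `authoritative` flag on its content. Both are assumptions for illustration, as are the titles and scores. It shows one possible way to use such a flag, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    title: str
    relevance: float     # score from the retriever (assumed given)
    authoritative: bool  # hypothetical flag maintained by the content owner


def rank_by_relevance(cands: list[Candidate]) -> list[Candidate]:
    """What a plain retriever does: highest relevance first."""
    return sorted(cands, key=lambda c: c.relevance, reverse=True)


def rank_with_authority(cands: list[Candidate]) -> list[Candidate]:
    """Endorsed documents first, then relevance within each group."""
    return sorted(cands, key=lambda c: (c.authoritative, c.relevance), reverse=True)


cands = [
    Candidate("Employee handbook 2019", 0.92, False),
    Candidate("Employee handbook current", 0.90, True),
    Candidate("Handbook draft in personal OneDrive", 0.91, False),
]

print(rank_by_relevance(cands)[0].title)
print(rank_with_authority(cands)[0].title)
```

The second ranking only works if someone has decided which document is authoritative and recorded that decision where the retrieval layer can see it. Without that metadata there is nothing to sort on.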

The knowledge question is: does your organisation know which content is authoritative, and is that content properly managed?

4. Quality

The content itself. Duplicates and near-duplicates. Scanned PDFs with no text layer, which AI cannot read without OCR. Documents with no headings, no metadata, no author information. Conflicting versions of the same asset.

Industry shorthand for this problem is ROT: Redundant, Outdated (or Obsolete), and Trivial content. In most enterprise environments, ROT accounts for somewhere between 30% and 60% of all content. That means when AI searches your knowledge base, anywhere from a third to more than half of what it finds is noise. Not malicious noise, just the accumulated sediment of years of content creation without lifecycle management.
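As a rough illustration of how a ROT share could be estimated, here is a sketch that labels each item as redundant (same normalised text as a newer item), outdated (not modified within a cut-off), or trivial (very short). The thresholds, paths, and sample content are assumptions chosen for the example. Real classification would need rules agreed with content owners.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Item:
    path: str
    text: str
    modified: date


def rot_labels(items, today, max_age_days=730, min_words=5):
    """Map each path to 'redundant', 'outdated', 'trivial', or None."""
    seen_hashes = set()
    labels = {}
    # Newest first, so the most recent copy of a duplicate is the one kept.
    for item in sorted(items, key=lambda i: i.modified, reverse=True):
        normalised = " ".join(item.text.lower().split())
        digest = hashlib.sha256(normalised.encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            labels[item.path] = "redundant"
        elif (today - item.modified).days > max_age_days:
            labels[item.path] = "outdated"
        elif len(normalised.split()) < min_words:
            labels[item.path] = "trivial"
        else:
            labels[item.path] = None
        seen_hashes.add(digest)
    return labels


# Hypothetical sample content.
items = [
    Item("/hr/expenses.docx", "Expenses must be submitted within 30 days of purchase.", date(2024, 6, 1)),
    Item("/hr/archive/expenses-copy.docx", "Expenses  must be submitted within 30 days of purchase.", date(2023, 1, 10)),
    Item("/hr/travel-2016.docx", "Travel bookings go through the travel desk by fax.", date(2016, 5, 2)),
    Item("/hr/notes.txt", "todo later", date(2024, 5, 20)),
]

labels = rot_labels(items, today=date(2024, 7, 1))
rot_share = sum(1 for label in labels.values() if label) / len(labels)
print(labels, rot_share)
```

Exact-hash matching only catches identical copies after whitespace and case normalisation. Near-duplicates would need a similarity measure, which this sketch deliberately leaves out.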

The quality question is: is your content in a format that AI can reliably reason over?

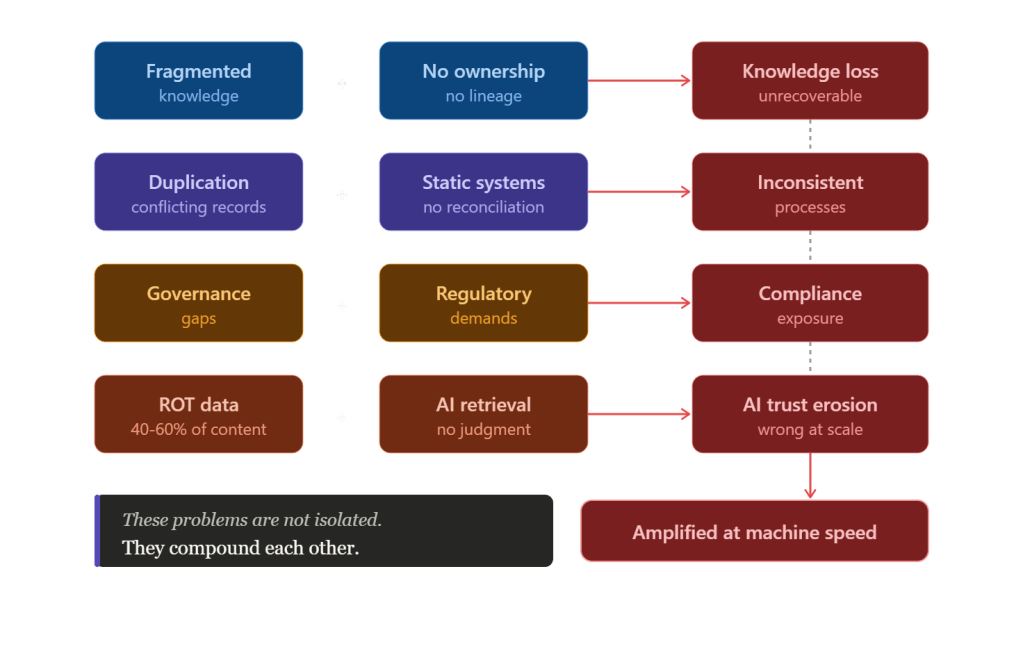

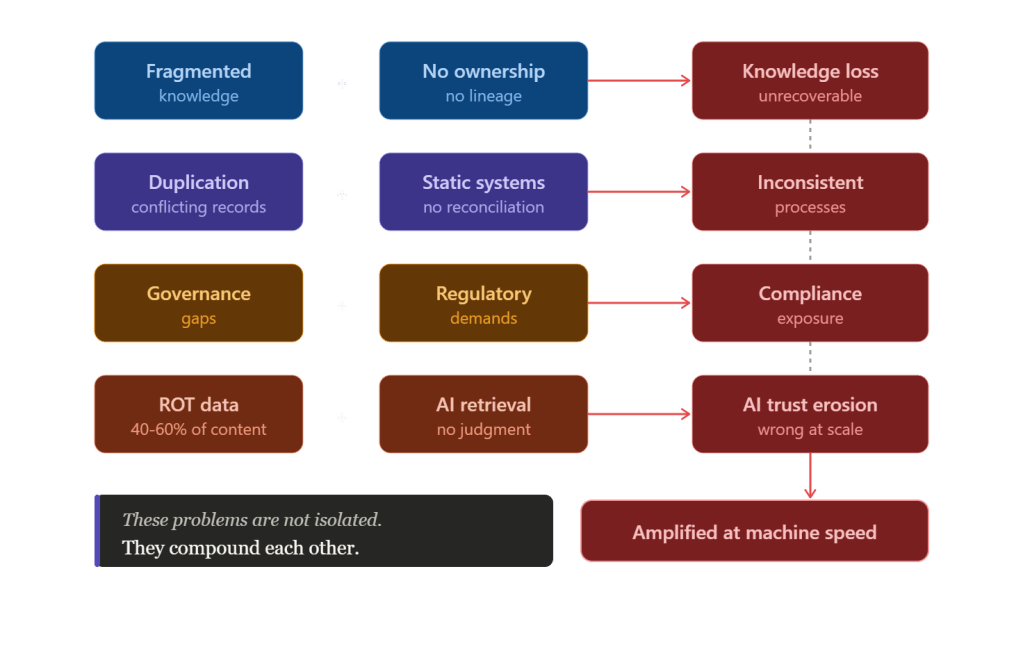

The Compounding Problem

These four foundations are not independent. They compound each other in ways that make the problem worse than any single dimension suggests.

Fragmented knowledge combined with unclear ownership means that when people leave or teams reorganise, valuable knowledge disappears. Nobody knows it existed, nobody knows who was responsible for it, and there is no lineage to trace back to the source. The knowledge is gone.

Duplication combined with static knowledge systems means different teams follow different versions of the same process — and nobody knows there is a discrepancy until something goes wrong. An auditor asks which version was in effect on a specific date. Silence.

ROT data combined with AI means the AI is operating on a knowledge base where nearly half the content should have been archived or deleted years ago. Every query is polluted with noise. Every answer carries the risk of being grounded in something that is no longer true.

And the most dangerous compounding effect: all of the above, amplified at machine speed. A human searching through SharePoint might notice that a document looks old. They might check with a colleague. They might apply judgment. AI does none of this. It retrieves, it synthesises, it presents — confidently, instantly, and at scale.

The Real Question

The conversation about enterprise AI is almost entirely focused on models, agents, and tooling. Which model is best? Which agent framework should we use? How do we build RAG pipelines?

These are the wrong questions. Or rather, they are the right questions asked too early.

The question that matters — the one that determines whether your AI investment creates value or creates liability — is simpler and harder:

Is your organisation’s knowledge ready for any AI to use?

Not ready for a specific AI. Not ready for Copilot. Ready for any AI system that will operate on your organisational knowledge, now and in the future. Because the model is a commodity. The knowledge is not.

The enterprises that get this right will extract durable, compounding value from AI. The ones that skip straight to deployment will spend the next three years explaining to their boards why the AI keeps citing documents from 2017.

Where to Start

If this resonates, here are three things you can do this week:

- Audit your permissions. Pick one SharePoint site. Check who actually has access. Check whether sharing links have been created that bypass your intended access model. In most organisations, this exercise alone surfaces surprises.

- Search for a common term. Pick a term like “remote work policy” or “expense procedure” and search across your tenant. Count how many versions you find. Check the dates. Check whether they agree with each other. This is what AI sees when it searches for the same term.

- Ask an uncomfortable question. In your next leadership meeting, ask: “What percentage of our content is redundant, outdated, or trivial?” If nobody has an answer, that is your answer.
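If you can export the results of the search exercise in the second step, the counting can be sketched as follows. The `Hit` structure, the paths, and the sample text are hypothetical. The sketch assumes you already have the matching documents' text and modified dates, however you obtained them.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Hit:
    path: str
    text: str
    modified: date


def summarise_hits(term, hits):
    """Count versions that mention the term and check whether they agree."""
    matching = [h for h in hits if term.lower() in h.text.lower()]
    # Collapse whitespace and case so trivial formatting differences are ignored.
    distinct_texts = {" ".join(h.text.lower().split()) for h in matching}
    dates = [h.modified for h in matching]
    return {
        "versions_found": len(matching),
        "distinct_wordings": len(distinct_texts),
        "oldest": min(dates) if dates else None,
        "newest": max(dates) if dates else None,
        "versions_agree": len(distinct_texts) <= 1,
    }


# Hypothetical export of search results.
hits = [
    Hit("/sites/hr/policy.docx", "Remote work policy: up to three days per week.", date(2024, 3, 1)),
    Hit("/sites/ops/policy-old.docx", "Remote work policy: up to one day per week.", date(2021, 9, 15)),
    Hit("/personal/jane/policy-draft.docx", "Remote work policy: fully remote by default.", date(2022, 11, 2)),
    Hit("/sites/hr/menu.docx", "Canteen menu", date(2024, 3, 4)),
]

summary = summarise_hits("remote work policy", hits)
print(summary)
```

Three versions, three different wordings, and a spread of more than two years between oldest and newest: that is the picture an AI assistant works from when someone asks about the policy.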

I’ll be writing more about each of these foundations over the coming weeks. The knowledge readiness problem is deeper than most people realise, and it does not get easier by ignoring it.